Plugin abandonware as a WordPress threat vector?

I run this site on a tiny VPS that would start crumbling if it got, say, 15 or more hits per second. Without caching, at least. Now,…

Do Kindly Internalize Mail (from me)?

I love too many online projects, content creators and the like to support them all. How to prioritise?

How do you do a “text box” block in WordPress when Gutenberg doesn’t include one?

But it is a trade-off between security and convenience.

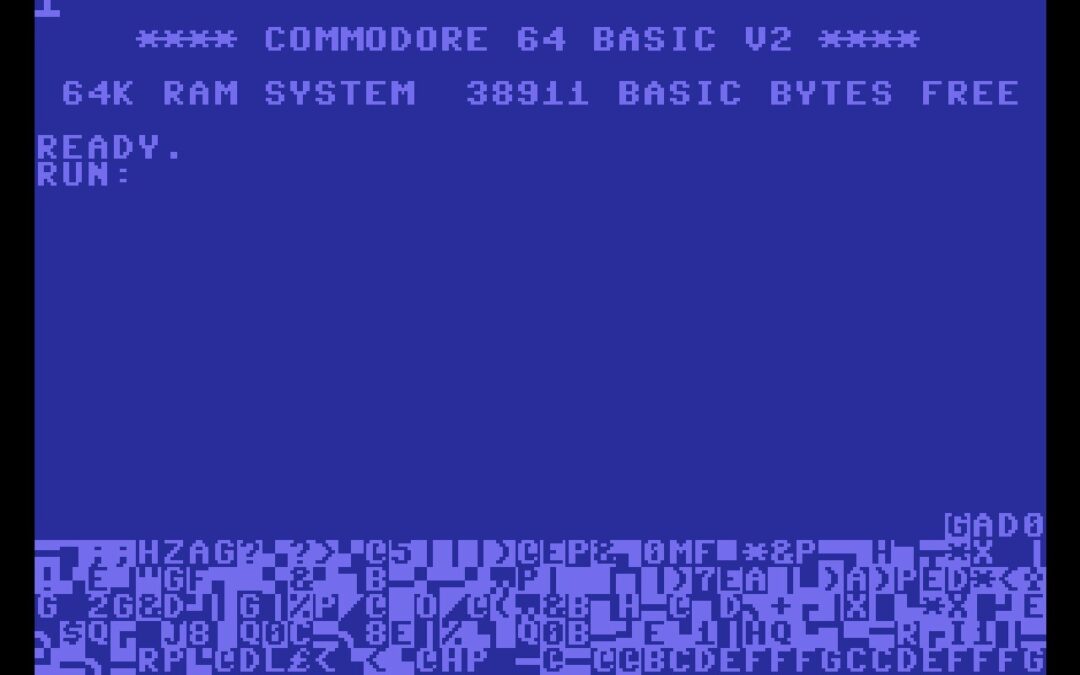

Emulating the oldest home computer on the newest one

A good smartphone is a boring smartphone.

Much like dancing instructors, Samba guides either overcomplicate things or just don’t explain themselves. Let’s find the joy in Samba.